Average of 10 Data Point Labview Continuous

What is Curve Fitting?

The purpose of curve fitting is to find a function f(x) in a function class Φ for the data $(x_i, y_i)$, where i = 0, 1, 2, …, n–1. The function f(x) minimizes the residual under the weight W. The residual is the distance between the data samples and f(x). A smaller residual means a better fit. In geometry, curve fitting is a curve y = f(x) that fits the data $(x_i, y_i)$, where i = 0, 1, 2, …, n–1.

In LabVIEW, you can use the following VIs to calculate the curve fitting function.

- Linear Fit VI

- Exponential Fit VI

- Power Fit VI

- Gaussian Peak Fit VI

- Logarithm Fit VI

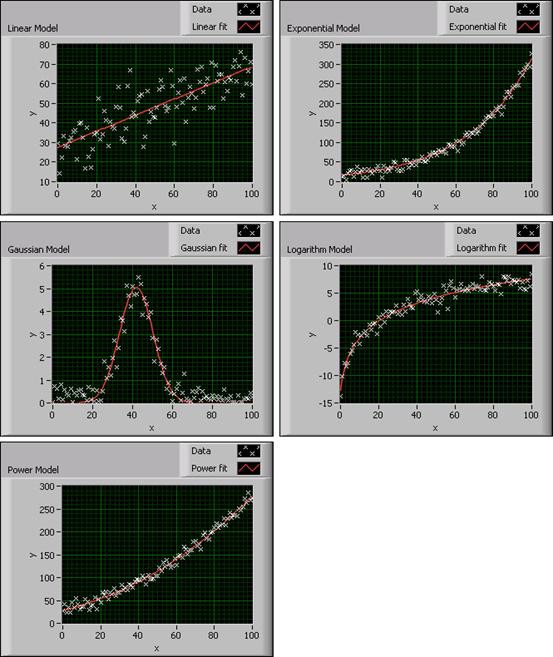

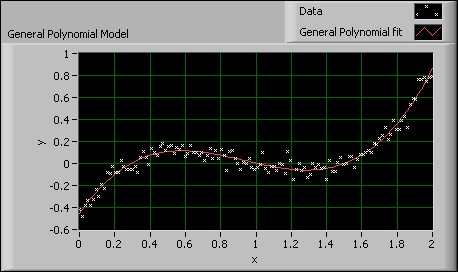

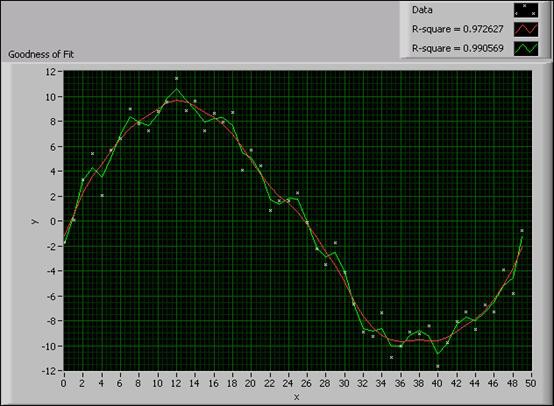

These VIs create different types of curve fitting models for the data set. Refer to the LabVIEW Help for information about using these VIs. The following graphs show the different types of fitting models you can create with LabVIEW.

Figure 1. Curve Fitting Models in LabVIEW

Before fitting the data set, you must decide which fitting model to use. An improper choice, for example, using a linear model to fit logarithmic data, leads to an incorrect fitting result or a result that inaccurately determines the characteristics of the data set. Therefore, you first must choose an appropriate fitting model based on the data distribution shape, and then judge if the model is suitable according to the result.

Every fitting model VI in LabVIEW has a Weight input. The Weight input default is 1, which means all data samples have the same influence on the fitting result. In some cases, outliers exist in the data set due to external factors such as noise. If you calculate the outliers at the same weight as the data samples, you risk a negative effect on the fitting result. Therefore, you can adjust the weight of the outliers, even set the weight to 0, to eliminate the negative influence.
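To make the effect of the Weight input concrete, here is a minimal sketch in plain Python rather than LabVIEW. It assumes a straight-line model and an objective of the form sum(w_i * (f(x_i) - y_i)^2); the function name `weighted_linear_fit` and the sample data are invented for illustration and are not part of any LabVIEW API.

```python
def weighted_linear_fit(x, y, w=None):
    """Fit y ~ slope * x + intercept by minimizing sum(w_i * (f(x_i) - y_i)**2)."""
    if w is None:
        w = [1.0] * len(x)  # default: every sample has the same influence
    sw = sum(w)
    sx = sum(wi * xi for wi, xi in zip(w, x))
    sy = sum(wi * yi for wi, yi in zip(w, y))
    sxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    sxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    denom = sw * sxx - sx * sx
    slope = (sw * sxy - sx * sy) / denom
    intercept = (sy - slope * sx) / sw
    return slope, intercept


x = [0, 1, 2, 3, 4, 5]
y = [1, 3, 5, 40, 9, 11]  # y = 2x + 1, except the sample at x = 3

equal = weighted_linear_fit(x, y)                        # outlier counted fully
masked = weighted_linear_fit(x, y, w=[1, 1, 1, 0, 1, 1])  # outlier weight set to 0
print(equal)
print(masked)
```

With the outlier weighted like every other sample, the slope is pulled well away from 2. Setting its weight to 0 removes it from the sums, so the remaining samples determine the line.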

You also can use the Curve Fitting Express VI in LabVIEW to develop a curve fitting application.

Curve Fitting Methods

Different fitting methods can evaluate the input data to find the curve fitting model parameters. Each method has its own criteria for evaluating the fitting residual in finding the fitted curve. By understanding the criteria for each method, you can choose the most appropriate method to apply to the data set and fit the curve. In LabVIEW, you can apply the Least Square (LS), Least Absolute Residual (LAR), or Bisquare fitting method to the Linear Fit, Exponential Fit, Power Fit, Gaussian Peak Fit, or Logarithm Fit VI to find the function f(x).

The LS method finds f(x) by minimizing the residual according to the following formula:

$$\frac{1}{n}\sum_{i=0}^{n-1} w_i \left(f(x_i) - y_i\right)^2$$

where n is the number of data samples

$w_i$ is the ith element of the array of weights for the data samples

$f(x_i)$ is the ith element of the array of y-values of the fitted model

$y_i$ is the ith element of the data set $(x_i, y_i)$

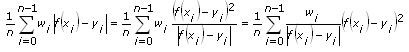

The LAR method finds f(x) by minimizing the residual according to the following formula:

$$\frac{1}{n}\sum_{i=0}^{n-1} w_i \left|f(x_i) - y_i\right|$$

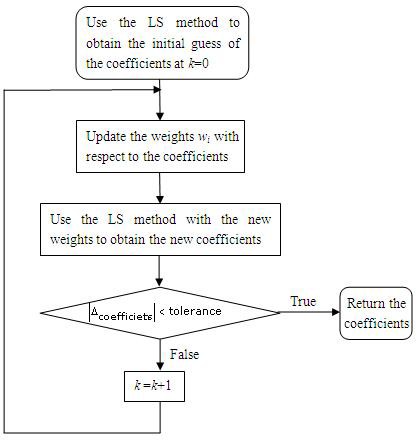

The Bisquare method finds f(x) by using an iterative process, as shown in the following flowchart, and calculates the residual by using the same formula as in the LS method. The Bisquare method calculates the data starting from iteration k.

Figure 2. Bisquare Method Flowchart

Because the LS, LAR, and Bisquare methods calculate f(x) differently, you want to choose the curve fitting method depending on the data set. For example, the LAR and Bisquare fitting methods are robust fitting methods. Use these methods if outliers exist in the data set. The following sections describe the LS, LAR, and Bisquare calculation methods in detail.

LS Method

The least square method begins with a linear equations solution.

Ax = b

A is a matrix and x and b are vectors. Ax – b represents the error of the equations.

The following equation represents the square of the error of the previous equation.

$$E(x) = (Ax - b)^T (Ax - b) = x^T A^T A x - 2 b^T A x + b^T b$$

To minimize the square error E(x), calculate the derivative of the previous function and set the result to zero:

$$E'(x) = 0$$

$$2 A^T A x - 2 A^T b = 0$$

$$A^T A x = A^T b$$

$$x = (A^T A)^{-1} A^T b$$

From the algorithm flow, you can see the efficiency of the calculation process, because the process is not iterative. Applications demanding efficiency can use this calculation process.
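The closed-form solution can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not LabVIEW's implementation: the model is a straight line, so each row of A is [x_i, 1] and A^T A is only 2×2, and the name `normal_equations_line` and the data are hypothetical.

```python
def normal_equations_line(x, y):
    """Solve (A^T A) p = A^T b for p = [slope, intercept]; row i of A is [x_i, 1]."""
    A = [[float(xi), 1.0] for xi in x]
    # Build the 2x2 matrix A^T A and the 2-vector A^T b.
    ata = [[sum(r[i] * r[j] for r in A) for j in range(2)] for i in range(2)]
    atb = [sum(r[i] * yi for r, yi in zip(A, y)) for i in range(2)]
    det = ata[0][0] * ata[1][1] - ata[0][1] * ata[1][0]
    # Apply the closed-form inverse of a 2x2 matrix; no iteration is needed.
    slope = (ata[1][1] * atb[0] - ata[0][1] * atb[1]) / det
    intercept = (ata[0][0] * atb[1] - ata[1][0] * atb[0]) / det
    return slope, intercept


x = [0, 1, 2, 3]
y = [1.1, 2.9, 5.2, 6.8]
slope, intercept = normal_equations_line(x, y)
print(slope, intercept)
```

The whole fit is one pass to accumulate the sums followed by a fixed amount of arithmetic, which is why the non-iterative LS method is fast.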

The LS method calculates x by minimizing the square error and processing data that has Gaussian-distributed noise. If the noise is not Gaussian-distributed, for example, if the data contains outliers, the LS method is not suitable. You can use another method, such as the LAR or Bisquare method, to process data containing non-Gaussian-distributed noise.

LAR Method

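The LAR method minimizes the residual according to the following formula, which can be rewritten as a weighted sum of squared residuals whenever the residuals are nonzero:

$$\frac{1}{n}\sum_{i=0}^{n-1} w_i \left|f(x_i) - y_i\right| = \frac{1}{n}\sum_{i=0}^{n-1} \frac{w_i}{\left|f(x_i) - y_i\right|}\left(f(x_i) - y_i\right)^2$$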

From the formula, you can see that the LAR method is an LS method with changing weights. If the data sample is far from f(x), the weight is set relatively lower after each iteration so that this data sample has less negative influence on the fitting result. Therefore, the LAR method is suitable for data with outliers.
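The idea of an LS fit with changing weights can be sketched as iteratively reweighted least squares. The code below is an assumption-laden illustration in plain Python, not LabVIEW's algorithm: it fits a straight line, uses 1/|residual| as the updated weight, and clamps tiny residuals with a hypothetical `eps` parameter so the weights stay finite.

```python
def weighted_line(x, y, w):
    """Weighted LS line: minimizes sum(w_i * (slope*x_i + intercept - y_i)**2)."""
    sw = sum(w)
    sx = sum(wi * xi for wi, xi in zip(w, x))
    sy = sum(wi * yi for wi, yi in zip(w, y))
    sxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    sxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    denom = sw * sxx - sx * sx
    slope = (sw * sxy - sx * sy) / denom
    intercept = (sy - slope * sx) / sw
    return slope, intercept


def lar_line(x, y, iterations=50, eps=1e-8):
    """Approximate the least-absolute-residual line by repeated reweighting."""
    slope, intercept = weighted_line(x, y, [1.0] * len(x))  # plain LS start
    for _ in range(iterations):
        # Samples far from the current line get a smaller weight, 1 / |residual|.
        # eps keeps the weight finite when a sample lies exactly on the line.
        w = [1.0 / max(abs(slope * xi + intercept - yi), eps)
             for xi, yi in zip(x, y)]
        slope, intercept = weighted_line(x, y, w)
    return slope, intercept


x = list(range(10))
y = [2.0 * xi + 1.0 for xi in x]
y[7] = 60.0  # one outlier; every other sample lies on y = 2x + 1

ls_slope, ls_intercept = weighted_line(x, y, [1.0] * len(x))
lar_slope, lar_intercept = lar_line(x, y)
print(ls_slope, ls_intercept)
print(lar_slope, lar_intercept)
```

On this data the plain LS slope is pulled toward the outlier, while the reweighted fit settles close to the line through the other nine samples. The cost is that every iteration repeats a full weighted fit.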

Bisquare Method

Like the LAR method, the Bisquare method also uses iteration to modify the weights of data samples. In most cases, the Bisquare method is less sensitive to outliers than the LAR method.
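The sketch below shows one common textbook form of bisquare reweighting in plain Python. It is not taken from the LabVIEW flowchart: the Tukey weight function, the tuning constant 4.685, and the scale estimate (median absolute residual divided by 0.6745) are assumptions chosen for illustration, and all names are hypothetical.

```python
from statistics import median


def weighted_line(x, y, w):
    """Weighted LS line: minimizes sum(w_i * (slope*x_i + intercept - y_i)**2)."""
    sw = sum(w)
    sx = sum(wi * xi for wi, xi in zip(w, x))
    sy = sum(wi * yi for wi, yi in zip(w, y))
    sxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    sxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    denom = sw * sxx - sx * sx
    slope = (sw * sxy - sx * sy) / denom
    intercept = (sy - slope * sx) / sw
    return slope, intercept


def bisquare_line(x, y, iterations=20, c=4.685):
    """Iteratively reweighted line fit with Tukey bisquare weights (assumed form)."""
    slope, intercept = weighted_line(x, y, [1.0] * len(x))  # plain LS start
    for _ in range(iterations):
        r = [slope * xi + intercept - yi for xi, yi in zip(x, y)]
        # Robust scale estimate from the median absolute residual.
        s = median([abs(ri) for ri in r]) / 0.6745
        if s < 1e-12:
            break  # most samples already lie on the line; nothing left to reweight
        w = []
        for ri in r:
            u = ri / (c * s)
            # Weight falls smoothly to 0; samples beyond c*s are ignored entirely.
            w.append((1.0 - u * u) ** 2 if abs(u) < 1.0 else 0.0)
        slope, intercept = weighted_line(x, y, w)
    return slope, intercept


x = list(range(10))
y = [2.0 * xi + 1.0 for xi in x]
y[7] = 60.0  # one outlier; every other sample lies on y = 2x + 1

bs_slope, bs_intercept = bisquare_line(x, y)
print(bs_slope, bs_intercept)
```

Unlike the 1/|residual| weighting, the bisquare weight reaches exactly 0 for samples far enough from the curve, so a gross outlier can drop out of the fit completely.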

Comparing the Curve Fitting Methods

If you compare the three curve fitting methods, the LAR and Bisquare methods decrease the influence of outliers by adjusting the weight of each data sample using an iterative process. Unfortunately, adjusting the weight of each data sample also decreases the efficiency of the LAR and Bisquare methods.

To better compare the three methods, examine the following experiment. Use the three methods to fit the same data set: a linear model containing 50 data samples with noise. The following table shows the computation times for each method:

Table 1. Processing Times for Three Fitting Methods

| Fitting method | LS | LAR | Bisquare |
| --- | --- | --- | --- |
| Time (μs) | 3.5 | 30 | 60 |

As you can see from the previous table, the LS method has the highest efficiency.

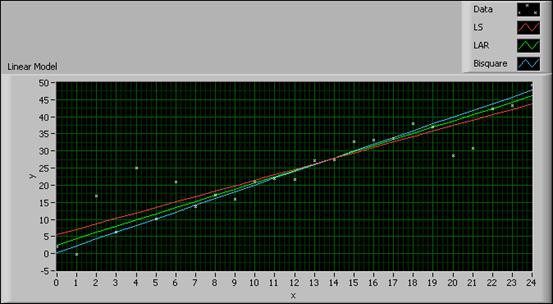

The following figure shows the influence of outliers on the three methods:

Figure 3. Comparison among Three Fitting Methods

The data samples far from the fitted curves are outliers. In the previous figure, you can regard the data samples at (2, 17), (20, 29), and (21, 31) as outliers. The results indicate the outliers have a greater influence on the LS method than on the LAR and Bisquare methods.

From the previous experiment, you can see that when choosing an appropriate fitting method, you must take both data quality and calculation efficiency into consideration.

LabVIEW Curve Fitting Models

In addition to the Linear Fit, Exponential Fit, Gaussian Peak Fit, Logarithm Fit, and Power Fit VIs, you also can use the following VIs to calculate the curve fitting function.

- General Polynomial Fit VI

- General Linear Fit VI

- Cubic Spline Fit VI

- Nonlinear Curve Fit VI

General Polynomial Fit

The General Polynomial Fit VI fits the data set to a polynomial function of the general form:

$$f(x) = a + bx + cx^2 + \dots$$

The following figure shows a General Polynomial curve fit using a third order polynomial to find the real zeroes of a data set. You can see that the zeroes occur at approximately (0.3, 0), (1, 0), and (1.5, 0).

Figure 4. General Polynomial Model

This VI calculates the mean square error (MSE) using the following equation:

$$\text{MSE} = \frac{1}{n}\sum_{i=0}^{n-1}\left(f(x_i) - y_i\right)^2$$

When you use the General Polynomial Fit VI, you first need to set the Polynomial Order input. A high Polynomial Order does not guarantee a better fitting result and can cause oscillation. A tenth order polynomial or lower can satisfy most applications. The Polynomial Order default is 2.

This VI has a Coefficient Constraint input. You can set this input if you know the exact values of the polynomial coefficients. By setting this input, the VI calculates a result closer to the true value.

General Linear Fit

The General Linear Fit VI fits the data set according to the following equation:

$$y = a_0 + a_1 f_1(x) + a_2 f_2(x) + \dots + a_{k-1} f_{k-1}(x)$$

where y is a linear combination of the coefficients $a_0, a_1, a_2, \dots, a_{k-1}$ and k is the number of coefficients.

The following equations show you how to extend the concept of a linear combination of coefficients so that the multiplier for a 1 is some function of x.

$$y = a_0 + a_1 \sin(\omega x)$$

$$y = a_0 + a_1 x^2$$

$$y = a_0 + a_1 \cos(\omega x^2)$$

where ω is the angular frequency.

In each of the previous equations, y is a linear combination of the coefficients $a_0$ and $a_1$. For the General Linear Fit VI, y also can be a linear combination of several coefficients, each multiplied by some function of x. Therefore, you can use the General Linear Fit VI to calculate the coefficients of any functional model that is a linear combination of its coefficients, such as the following:

$$y = a_0 + a_1 \sin(\omega x)$$

$$y = a_0 + a_1 x^2 + a_2 \cos(\omega x^2)$$

$$y = a_0 + a_1 \left(3\sin(\omega x)\right) + a_2 x^3 + \frac{a_3}{x} + \dots$$

In each of the previous equations, y can be both a linear function of the coefficients $a_0, a_1, a_2, \dots$ and a nonlinear function of x.

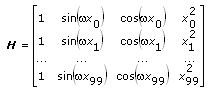

Building the Observation Matrix

When you use the General Linear Fit VI, you must build the observation matrix H . For example, the following equation defines a model using data from a transducer.

$$y = a_0 + a_1 \sin(\omega x) + a_2 \cos(\omega x) + a_3 x^2$$

The following table shows the multipliers for the coefficients, $a_j$, in the previous equation.

| Coefficient | Multiplier |
| --- | --- |
| $a_0$ | 1 |
| $a_1$ | $\sin(\omega x)$ |
| $a_2$ | $\cos(\omega x)$ |
| $a_3$ | $x^2$ |

To build the observation matrix H, set each column of H to one of the independent functions, or multipliers, evaluated at each x value, $x_i$. The following equation defines the observation matrix H for a data set containing 100 x values using the previous equation.

$$H = \begin{bmatrix} 1 & \sin(\omega x_0) & \cos(\omega x_0) & x_0^2 \\ 1 & \sin(\omega x_1) & \cos(\omega x_1) & x_1^2 \\ \vdots & \vdots & \vdots & \vdots \\ 1 & \sin(\omega x_{99}) & \cos(\omega x_{99}) & x_{99}^2 \end{bmatrix}$$

If the data set contains n data points and the model has k coefficients, $a_0, a_1, \dots, a_{k-1}$, then H is an n × k observation matrix. Therefore, the number of rows in H equals the number of data points, n. The number of columns in H equals the number of coefficients, k.

To obtain the coefficients, $a_0, a_1, \dots, a_{k-1}$, the General Linear Fit VI solves the following linear equation:

$$Ha = y$$

where $a = [a_0\ a_1\ \dots\ a_{k-1}]^T$ and $y = [y_0\ y_1\ \dots\ y_{n-1}]^T$.
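As a rough illustration of building H and solving for the coefficients, the plain-Python sketch below uses the transducer model from this section with 100 x values. How the VI actually solves the system is not stated here; the sketch assumes the least-squares solution via the normal equations $H^T H a = H^T y$, and the function names, the value of ω, and the generating coefficients are all hypothetical.

```python
import math


def observation_matrix(xs, omega):
    """One row per x value; columns are the multipliers 1, sin(wx), cos(wx), x^2."""
    return [[1.0, math.sin(omega * x), math.cos(omega * x), x * x] for x in xs]


def solve(M, v):
    """Gaussian elimination with partial pivoting for a small square system."""
    n = len(v)
    aug = [row[:] + [v[i]] for i, row in enumerate(M)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    sol = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * sol[c] for c in range(r + 1, n))
        sol[r] = (aug[r][n] - s) / aug[r][r]
    return sol


def general_linear_fit(H, y):
    """Least-squares coefficients from the normal equations (H^T H) a = H^T y."""
    k = len(H[0])
    hth = [[sum(row[i] * row[j] for row in H) for j in range(k)] for i in range(k)]
    hty = [sum(row[i] * yi for row, yi in zip(H, y)) for i in range(k)]
    return solve(hth, hty)


omega = 2.0                               # assumed angular frequency
xs = [0.05 * i for i in range(100)]       # 100 x values, so H is 100 x 4
true_a = [1.0, 0.5, -2.0, 0.3]            # made-up coefficients a0..a3
H = observation_matrix(xs, omega)
ys = [sum(a * h for a, h in zip(true_a, row)) for row in H]  # noiseless y values

a_est = general_linear_fit(H, ys)
print(a_est)
```

Because the model is linear in the coefficients, adding or swapping a multiplier only changes a column of H; the solving step stays the same.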

Cubic Spline Fit

A spline is a piecewise polynomial function for interpolating and smoothing. In curve fitting, splines approximate complex shapes.

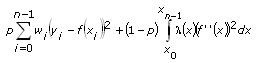

The Cubic Spline Fit VI fits the data set $(x_i, y_i)$ by minimizing the following function:

$$p\sum_{i=0}^{n-1} w_i \left(y_i - f(x_i)\right)^2 + (1 - p)\int_{x_0}^{x_{n-1}} \lambda(x)\left(f''(x)\right)^2 dx$$

where p is the balance parameter

$w_i$ is the ith element of the array of weights for the data set

$y_i$ is the ith element of the data set $(x_i, y_i)$

$x_i$ is the ith element of the data set $(x_i, y_i)$

$f''(x)$ is the second order derivative of the cubic spline function, f(x)

$\lambda(x)$ is the piecewise constant function:

$$\lambda(x) = \lambda_i, \quad x_i \le x < x_{i+1}, \quad i = 0, 1, \dots, n-2$$

where $\lambda_i$ is the ith element of the Smoothness input of the VI.

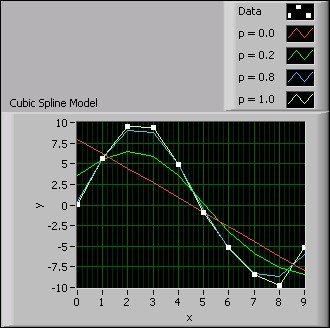

If the Balance Parameter input p is 0, the cubic spline model is equivalent to a linear model. If the Balance Parameter input p is 1, the fitting method is equivalent to cubic spline interpolation. p must fall in the range [0, 1] to make the fitted curve both close to the observations and smooth. The closer p is to 0, the smoother the fitted curve. The closer p is to 1, the closer the fitted curve is to the observations. The following figure shows the fitting results when p takes different values.

Figure 5. Cubic Spline Model

You can see from the previous figure that when p equals 1.0, the fitted curve is closest to the observation data. When p equals 0.0, the fitted curve is the smoothest, but the curve does not pass through any of the data points.

Nonlinear Curve Fit

The Nonlinear Curve Fit VI fits data to the curve using the nonlinear Levenberg-Marquardt method according to the following equation:

$$y = f(x;\ a_0, a_1, a_2, \dots, a_{k-1})$$

where $a_0, a_1, a_2, \dots, a_{k-1}$ are the coefficients and k is the number of coefficients.

The nonlinear Levenberg-Marquardt method is the most general curve fitting method and does not require y to have a linear relationship with $a_0, a_1, a_2, \dots, a_{k-1}$. You can use the nonlinear Levenberg-Marquardt method to fit linear or nonlinear curves. However, the most common application of the method is to fit a nonlinear curve, because the general linear fit method is better for linear curve fitting.
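For readers unfamiliar with the method, the following is a bare-bones Levenberg-Marquardt loop in plain Python. It is only a sketch under stated assumptions, not the Nonlinear Curve Fit VI: the model y = a·exp(b·x), the starting values, the damping schedule (divide by 10 on success, multiply by 10 on failure), and every name in the code are chosen for illustration.

```python
import math


def model(x, a, b):
    """Hypothetical nonlinear model y = a * exp(b * x)."""
    return a * math.exp(b * x)


def sum_sq(x, y, a, b):
    """Sum of squared residuals; infinite if the trial parameters overflow."""
    try:
        return sum((model(xi, a, b) - yi) ** 2 for xi, yi in zip(x, y))
    except OverflowError:
        return math.inf


def levenberg_marquardt(x, y, a, b, iterations=100, damping=1e-3):
    cost = sum_sq(x, y, a, b)
    for _ in range(iterations):
        # Accumulate J^T J (2x2) and J^T r at the current parameters.
        jtj = [[0.0, 0.0], [0.0, 0.0]]
        jtr = [0.0, 0.0]
        for xi, yi in zip(x, y):
            e = math.exp(b * xi)
            row = (e, a * xi * e)  # partial derivatives df/da and df/db
            r = a * e - yi
            for p in range(2):
                jtr[p] += row[p] * r
                for q in range(2):
                    jtj[p][q] += row[p] * row[q]
        # Damped normal equations: (J^T J + damping * diag(J^T J)) step = -J^T r
        h00 = jtj[0][0] * (1.0 + damping)
        h11 = jtj[1][1] * (1.0 + damping)
        det = h00 * h11 - jtj[0][1] * jtj[1][0]
        if det == 0.0:
            break
        da = (-jtr[0] * h11 + jtr[1] * jtj[0][1]) / det
        db = (-jtr[1] * h00 + jtr[0] * jtj[1][0]) / det
        trial = sum_sq(x, y, a + da, b + db)
        if trial < cost:
            a, b, cost = a + da, b + db, trial  # accept the step, relax damping
            damping *= 0.1
        else:
            damping *= 10.0                     # reject the step, shorten the next one
    return a, b, cost


x = [0.2 * i for i in range(11)]
y = [2.0 * math.exp(1.5 * xi) for xi in x]  # noiseless data from a = 2, b = 1.5

start_cost = sum_sq(x, y, 1.0, 1.0)
a_hat, b_hat, final_cost = levenberg_marquardt(x, y, a=1.0, b=1.0)
print(a_hat, b_hat, final_cost)
```

A step is kept only when it lowers the squared error, so the loop behaves like Gauss-Newton when steps succeed and like a short gradient step when they fail. How close the estimates get to the generating values depends on the starting point and the iteration budget.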

LabVIEW also provides the Constrained Nonlinear Curve Fit VI to fit a nonlinear curve with constraints. You can set the upper and lower limits of each fitting parameter based on prior knowledge about the data set to obtain a better fitting result.

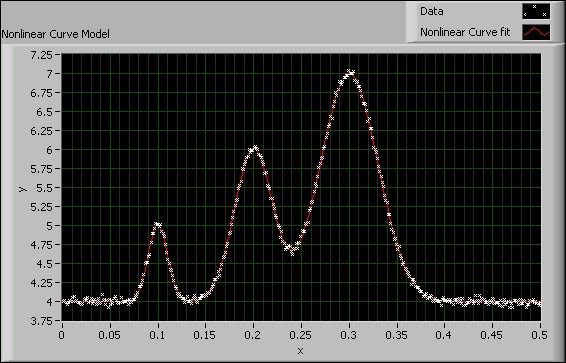

The following figure shows the use of the Nonlinear Curve Fit VI on a data set. The nonlinear nature of the data set is appropriate for applying the Levenberg-Marquardt method.

Figure 6. Nonlinear Curve Model

Preprocessing

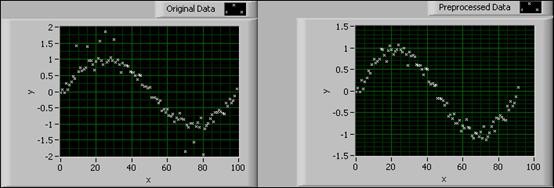

The Remove Outliers VI preprocesses the data set by removing data points that fall outside of a range. The VI eliminates the influence of outliers on the objective function. The following figure shows a data set before and after the application of the Remove Outliers VI.

Figure 7. Remove Outliers VI

In the previous figure, the graph on the left shows the original data set with the existence of outliers. The graph on the right shows the preprocessed data after removing the outliers.

You also can remove the outliers that fall within the array indices you specify.

Some data sets demand a higher degree of preprocessing. A median filter preprocessing tool is useful for both removing the outliers and smoothing out data.
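Both preprocessing ideas are easy to sketch in plain Python. The code below is an illustration, not the Remove Outliers VI: it assumes the range applies to the y-values, uses a sliding window that shrinks at the ends of the array, and all function and parameter names are hypothetical.

```python
from statistics import median


def remove_outside_range(x, y, low, high):
    """Keep only the samples whose y-value lies inside [low, high]."""
    kept = [(xi, yi) for xi, yi in zip(x, y) if low <= yi <= high]
    return [p[0] for p in kept], [p[1] for p in kept]


def median_filter(values, half_width=1):
    """Replace each value with the median of its neighborhood."""
    out = []
    for i in range(len(values)):
        lo = max(0, i - half_width)
        hi = min(len(values), i + half_width + 1)
        out.append(median(values[lo:hi]))  # window is shorter at the edges
    return out


x = [0, 1, 2, 3, 4, 5]
y = [1.0, 1.2, 9.0, 1.1, 0.9, 1.3]  # the sample at x = 2 is an outlier

kept_x, kept_y = remove_outside_range(x, y, low=0.0, high=2.0)
filtered = median_filter(y)
print(kept_x, kept_y)
print(filtered)
```

Range-based removal shortens the data set, whereas the median filter keeps the array length and replaces the spike with a value taken from its neighbors.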

Postprocessing

LabVIEW offers VIs to evaluate the data results after performing curve fitting. These VIs can determine the accuracy of the curve fitting results and calculate the confidence and prediction intervals in a series of measurements.

Goodness of Fit

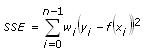

The Goodness of Fit VI evaluates the fitting result and calculates the sum of squares error (SSE), R-square ($R^2$), and root mean squared error (RMSE) based on the fitting result. These three statistical parameters describe how well the fitted model matches the original data set. The following equations describe the SSE and RMSE, respectively.

$$\text{SSE} = \sum_{i=0}^{n-1} w_i \left(f(x_i) - y_i\right)^2$$

$$\text{RMSE} = \sqrt{\frac{\text{SSE}}{\text{DOF}}}$$

where DOF is the degree of freedom.

The SSE and RMSE reflect the influence of random factors and show the difference between the data set and the fitted model.

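The following equation describes R-square:

$$R^2 = 1 - \frac{\text{SSE}}{\text{SST}}$$

where SST is the total sum of squares according to the following equation:

$$\text{SST} = \sum_{i=0}^{n-1} w_i \left(y_i - \bar{y}\right)^2$$

where $\bar{y}$ is the mean of the y-values.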

R-square is a quantitative representation of the fitting level. A high R-square means a better fit between the fitting model and the data set. Because R-square is a fractional representation of the SSE and SST, the value must be between 0 and 1.

0 ≤ R-square ≤ 1

When the data samples exactly fit on the fitted curve, SSE equals 0 and R-square equals 1. When some of the data samples are outside of the fitted curve, SSE is greater than 0 and R-square is less than 1. Because R-square is normalized, the closer the R-square is to 1, the higher the fitting level and the less smooth the curve.
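The three statistics can be computed directly from the data and the fitted values. The plain-Python sketch below assumes all weights equal 1 and takes the degree of freedom to be the number of samples minus the number of fitted parameters; the function name and the data are made up, and this is not the Goodness of Fit VI itself.

```python
import math


def goodness_of_fit(y, fitted, num_params):
    """Return SSE, R-square, and RMSE for unit weights."""
    n = len(y)
    mean_y = sum(y) / n
    sse = sum((fi - yi) ** 2 for fi, yi in zip(fitted, y))
    sst = sum((yi - mean_y) ** 2 for yi in y)
    dof = n - num_params  # assumed definition of the degree of freedom
    return {
        "sse": sse,
        "r_square": 1.0 - sse / sst,
        "rmse": math.sqrt(sse / dof),
    }


x = [0, 1, 2, 3]
y = [1.1, 2.9, 5.2, 6.8]
fitted = [1.94 * xi + 1.09 for xi in x]  # LS line for this data

stats = goodness_of_fit(y, fitted, num_params=2)
perfect = goodness_of_fit(y, y, num_params=2)  # samples exactly on the curve
print(stats)
print(perfect)
```

The second call shows the limiting case described above: when every sample lies on the fitted curve, SSE is 0 and R-square is 1.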

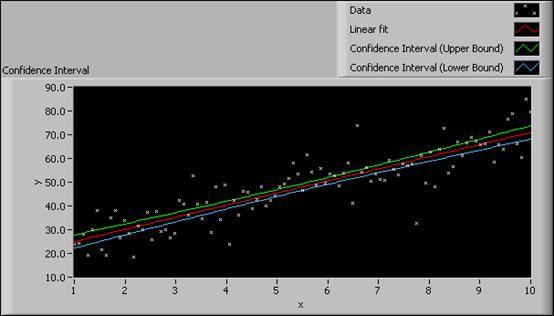

The following figure shows the fitted curves of a data set with different R-square results.

Figure 8. Fitting Results with Different R-Square Values

You can see from the previous figure that the fitted curve with R-square equal to 0.99 fits the data set more closely but is less smooth than the fitted curve with R-square equal to 0.97.

Confidence Interval and Prediction Interval

In real-world test and measurement, the data samples differ from one experiment to the next because of measurement error, so the fitting results also differ. If the measurement errors are uncorrelated and normally distributed across all experiments, you can use the confidence interval to estimate the uncertainty of the fitting parameters. You also can use the prediction interval to estimate the uncertainty of the dependent values of the data set.

For example, you have the sample set $(x_0, y_0), (x_1, y_1), \dots, (x_{n-1}, y_{n-1})$ for the linear fit function $y = a_0 x + a_1$. For each data sample, $(x_i, y_i)$, the variance of the measurement error, $\sigma_i^2$, is specified by the weight, $w_i = 1/\sigma_i^2$.

You can use the function form $x = (A^T A)^{-1} A^T b$ of the LS method to fit the data according to the following equation.

where $a = [a_0\ a_1]^T$

$y = [y_0\ y_1\ \dots\ y_{n-1}]^T$

You can rewrite the covariance matrix of parameters, a 0 and a 1, as the following equation.

where J is the Jacobian matrix

m is the number of parameters

n is the number of data samples

In the previous equation, the number of parameters, m, equals 2. The ith diagonal element of C, $C_{ii}$, is the variance of the parameter $a_i$, $\sigma_{a_i}^2$.

The confidence interval estimates the uncertainty of the fitting parameters at a certain confidence level, $100(1-\alpha)\%$. For example, a 95% confidence interval means that the true value of the fitting parameter has a 95% probability of falling within the confidence interval. The confidence interval of the ith fitting parameter is:

$$\left[a_i - t_{1-\alpha/2,\,n-m}\,\sigma_{a_i},\; a_i + t_{1-\alpha/2,\,n-m}\,\sigma_{a_i}\right]$$

where $t_{1-\alpha/2,\,n-m}$ is the Student's t inverse cumulative distribution function of n–m degrees of freedom at probability $1-\alpha/2$

and

$\sigma_{a_i}$ is the standard deviation of the parameter $a_i$ and equals $\sqrt{C_{ii}}$.

You also can estimate the confidence interval of each data sample at a certain confidence level, $100(1-\alpha)\%$. For example, a 95% confidence interval of a sample means that the true value of the sample has a 95% probability of falling within the confidence interval. The confidence interval of the ith data sample is:

$$\left[f(x_i) - t_{1-\alpha/2,\,n-m}\sqrt{\operatorname{diag}_i\left(JCJ^T\right)},\; f(x_i) + t_{1-\alpha/2,\,n-m}\sqrt{\operatorname{diag}_i\left(JCJ^T\right)}\right]$$

where $\operatorname{diag}_i(A)$ denotes the ith diagonal element of matrix A. In the above formula, the matrix $JCJ^T$ represents matrix A.
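As a worked illustration of the parameter confidence interval for the linear fit y = a0·x + a1, the plain-Python sketch below uses the standard unweighted least-squares result, in which the parameter covariance is the residual variance SSE/(n − m) times the inverse of A^T A. That formula, the function name, and the data are assumptions for illustration and may differ from the weighted form LabVIEW uses. The critical value 2.306 is the tabulated Student's t value for 8 degrees of freedom at probability 0.975, passed in by hand because the Python standard library has no t distribution.

```python
import math


def line_fit_confidence(x, y, t_crit):
    """Fit y = a0*x + a1 by unweighted LS; return estimates with confidence intervals."""
    n, m = len(x), 2
    sx, sy = sum(x), sum(y)
    sxx = sum(xi * xi for xi in x)
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    det = n * sxx - sx * sx
    a0 = (n * sxy - sx * sy) / det      # slope
    a1 = (sxx * sy - sx * sxy) / det    # intercept
    sse = sum((a0 * xi + a1 - yi) ** 2 for xi, yi in zip(x, y))
    s2 = sse / (n - m)                  # residual variance estimate
    # Diagonal of C = s2 * (A^T A)^{-1}, where row i of A is [x_i, 1].
    sigma_a0 = math.sqrt(s2 * n / det)
    sigma_a1 = math.sqrt(s2 * sxx / det)
    ci_a0 = (a0 - t_crit * sigma_a0, a0 + t_crit * sigma_a0)
    ci_a1 = (a1 - t_crit * sigma_a1, a1 + t_crit * sigma_a1)
    return (a0, ci_a0), (a1, ci_a1)


x = list(range(10))
# Samples from y = 2x + 1 with a small alternating error of +/-0.1.
y = [2.0 * xi + 1.0 + (0.1 if i % 2 == 0 else -0.1) for i, xi in enumerate(x)]

T_CRIT_95_DOF8 = 2.306  # t value for a 95% interval with n - m = 8
(a0, ci_a0), (a1, ci_a1) = line_fit_confidence(x, y, T_CRIT_95_DOF8)
print(a0, ci_a0)
print(a1, ci_a1)
```

For this data both intervals contain the generating values 2 and 1. A smaller residual variance or more samples narrows the intervals.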

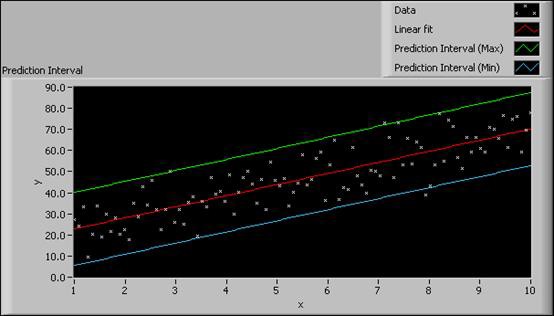

The prediction interval estimates the uncertainty of the data samples in the subsequent measurement experiment at a certain confidence level. For example, a 95% prediction interval means that the data sample has a 95% probability of falling within the prediction interval in the next measurement experiment. Because the prediction interval reflects not only the uncertainty of the true value, but also the uncertainty of the next measurement, the prediction interval is wider than the confidence interval. The prediction interval of the ith sample is:

LabVIEW provides VIs to calculate the confidence interval and prediction interval of the common curve fitting models, such as the linear fit, exponential fit, Gaussian peak fit, logarithm fit, and power fit models. These VIs calculate the upper and lower bounds of the confidence interval or prediction interval according to the confidence level you set.

The following figure shows examples of the Confidence Interval graph and the Prediction Interval graph, respectively, for the same data set.

Figure 9. Confidence Interval and Prediction Interval

From the Confidence Interval graph, you can see that the confidence interval is narrow. A small confidence interval indicates a fitted curve that is close to the real curve. From the Prediction Interval graph, you can conclude that each data sample in the next measurement experiment will have a 95% chance of falling within the prediction interval.


Source: https://www.ni.com/en-us/innovations/white-papers/08/overview-of-curve-fitting-models-and-methods-in-labview.html
